While I wanted to write next about how societal structures are usually dragged along in the wake of disruptive technologies, a comment on my previous blog post brought the issue of technological debt to my attention. Technological debt accrues from troubleshooting a problem rather than future-proofing the solution, and it has to be repaid later to avoid stalling operations. The ability to redeem technological debt depends on how well a developer has anticipated technological progress and its implications.

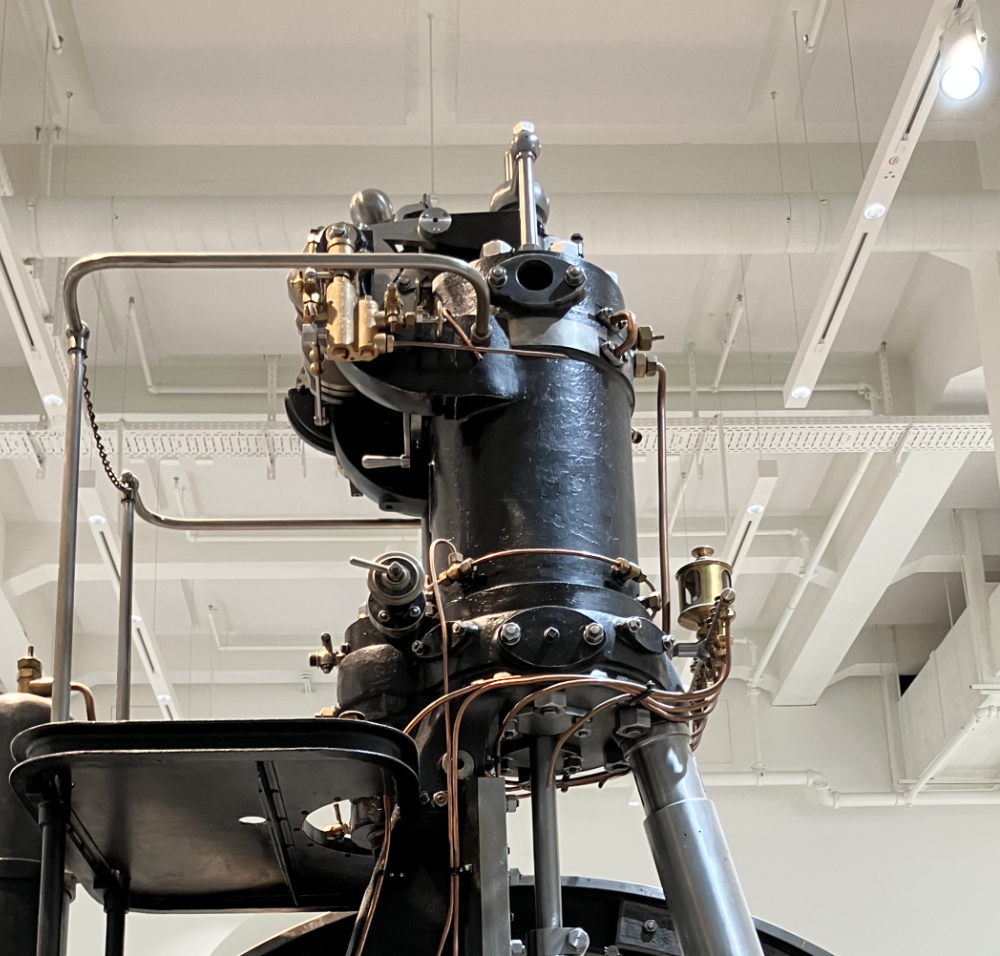

If the retrieved data is flawed or mismatched, the LLM will still confidently generate an output—because that’s what it’s designed to do. There’s no built-in mechanism for validation or correction. So RAG is stuck—it needs external data to improve accuracy, but it lacks the ability to judge whether that data makes sense in context. It’s like Diesel’s first engine, trying to run on steam infrastructure—it technically works, but not well enough to unlock its full potential.
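To make that failure mode concrete, here is a minimal sketch of similarity-only retrieval. It is an illustration, not how any particular RAG product works: the bag-of-words scoring, the `retrieve` function, and the two sample documents are all assumptions made for this example. The point is that the retriever always hands back its best match, even when the best match does not answer the question.

```python
import math
from collections import Counter


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two bag-of-words vectors."""
    dot = sum(a[token] * b[token] for token in a)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def retrieve(query: str, docs: list[str]) -> tuple[float, str]:
    """Return the (score, document) pair with the highest similarity.

    Note what is missing: no threshold, no check that the document
    actually answers the query. Something is always returned.
    """
    q = Counter(query.lower().split())
    return max((cosine(q, Counter(d.lower().split())), d) for d in docs)


# Hypothetical two-document corpus, invented for this sketch.
DOCS = [
    "Rudolf Diesel patented his engine in 1892",
    "Steam engines need a boiler and a fire",
]

score, doc = retrieve("What is the boiling point of diesel fuel", DOCS)
# The only overlap is the word "diesel", yet this document is what
# would be passed to the LLM as "context".
```

The retrieved sentence shares a single word with the question and says nothing about boiling points, but a generator downstream has no way of knowing that from the similarity score alone.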

This is why we created RUDS. RUDS isn’t just another AI model—it’s a new paradigm. Unlike RAG, which relies on surface-level similarity, RUDS creates a shared semantic framework where data isn’t just retrieved—it’s understood. RUDS enables AI to reason across structured and unstructured data by building a semantic layer that allows different systems to interpret and validate information based on context and intent. And while RUDS doesn’t need a vector database to operate, it can integrate one if needed—because it’s built to unify, not to patch.

But that’s not the biggest difference. RUDS also tackles the governance problem that RAG-based architectures ignore. RUDS integrates an expert system capable of applying rule-based validation to AI-generated insights. This means that AI outputs are not only more accurate—they are explainable, traceable, and operationally aligned. RUDS retains context over time, constantly updating its semantic framework so AI decisions aren’t made in isolation—they’re grounded in a dynamic, evolving understanding of the entire system.
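As a rough illustration of what rule-based validation of an AI output can look like, consider the sketch below. It is not the RUDS implementation: the `Rule` and `Verdict` types, the `validate` function, and the two example rules are hypothetical names chosen for this post. What it shows is the principle that every output is checked against explicit, named rules, and that the verdict records which rules passed and which failed, so the decision stays traceable.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class Rule:
    """A named, explicit check applied to an AI-generated output."""
    name: str
    check: Callable[[dict], bool]


@dataclass
class Verdict:
    """Outcome of validation, including the trail of rules applied."""
    accepted: bool
    passed: list[str]
    failed: list[str]


def validate(output: dict, rules: list[Rule]) -> Verdict:
    """Apply every rule and record which ones passed or failed."""
    passed: list[str] = []
    failed: list[str] = []
    for rule in rules:
        (passed if rule.check(output) else failed).append(rule.name)
    return Verdict(accepted=not failed, passed=passed, failed=failed)


# Hypothetical business rules, invented for this sketch.
RULES = [
    Rule("has_source", lambda o: bool(o.get("source"))),
    Rule("quantity_non_negative", lambda o: o.get("quantity", 0) >= 0),
]

good = validate({"source": "erp", "quantity": 5}, RULES)
bad = validate({"quantity": -3}, RULES)
```

Here `good.accepted` is true, while `bad` is rejected with both rule names listed in `bad.failed`, which is the kind of explanation a purely generative pipeline cannot give on its own.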

RAG retrieves facts—RUDS understands meaning. This is why RUDS reduces technological debt rather than accumulating it. When you build AI on a semantic foundation, you’re not bolting intelligence onto outdated infrastructure—you’re laying the groundwork for AI-native ecosystems. Just as Diesel’s engine only reached its full potential when it broke free from steam infrastructure, AI will only reach its true potential when it moves beyond the brittle, API-based systems of today. That’s what RUDS enables.

If you want to know more, get in touch.